March 12, 2016

by Andrey Filippov

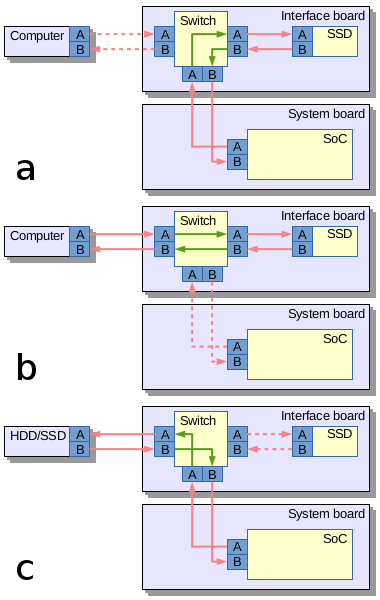

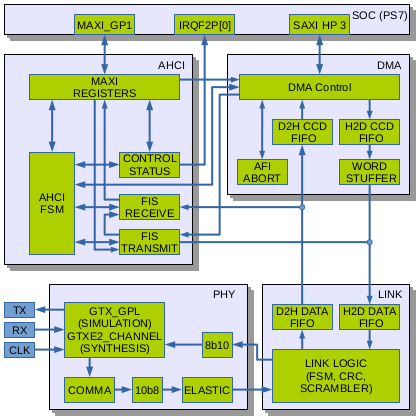

The implementation includes an AHCI SATA host adapter in Verilog under GNU GPLv3+ and a software driver for GNU/Linux running on Xilinx Zynq. The complete project is simulated with Icarus Verilog; no encrypted modules are required.

This concludes the last major FPGA development step in our race against the finished camera parts and boards that are already arriving at the Elphel facility, and it had to be completed before the NC393 can be shipped to our customers.

Fig. 1. AHCI Host Adapter block diagram

Why did we need SATA?

Elphel cameras started as network cameras – devices attached to and controlled over Ethernet. The previous generations used a 100Mbps connection (limited by the SoC hardware), and the NC393 uses GigE. But this bandwidth is still not sufficient, as many camera applications require high image quality (compared to “raw”) without the compression artifacts that are always present (even if not noticeable by a human viewer) with video codecs. Recording video/images to some storage media is definitely an option, and we used it in the older camera too, but the SoC IDE controller limited the recording speed to just 16MB/s. That was about twice as much as the 100Mb/s network could carry, but it was still a bottleneck for the system in many cases. The NC393 can generate 12 times the pixel rate of the NC353 (4 simultaneous channels instead of a single one, each running 3 times faster), so we need about 200MB/s recording speed to keep the same compression quality at the increased maximal frame rate. An even higher recording rate, which modern SSDs are capable of, is very desirable.
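The recording-rate estimate above can be reproduced with a few lines of arithmetic. This is only a sketch: the variable names are made up for illustration, and it assumes that the required recording rate scales linearly with the pixel rate when the compression quality is kept the same.

```python
# Figures quoted in the post; the names are illustrative, not from the camera code.
NC353_RECORDING_MB_S = 16    # recording speed limited by the SoC IDE controller
CHANNELS = 4                 # simultaneous sensor channels in the NC393
SPEEDUP_PER_CHANNEL = 3      # each channel runs 3 times faster

# Assumption: recording rate scales linearly with pixel rate at equal quality.
pixel_rate_ratio = CHANNELS * SPEEDUP_PER_CHANNEL
required_mb_s = NC353_RECORDING_MB_S * pixel_rate_ratio

# Raw GigE line rate in MB/s, before any protocol overhead.
GIGE_LINE_RATE_MB_S = 1000 / 8

print("pixel rate ratio:", pixel_rate_ratio)
print("required recording rate, MB/s:", required_mb_s)
print("exceeds raw GigE line rate:", required_mb_s > GIGE_LINE_RATE_MB_S)
```

The result (192MB/s) is the "about 200MB/s" figure, and it is above even the raw GigE line rate, which is why the network alone cannot carry the data.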

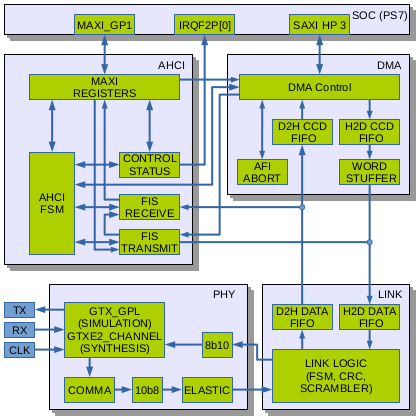

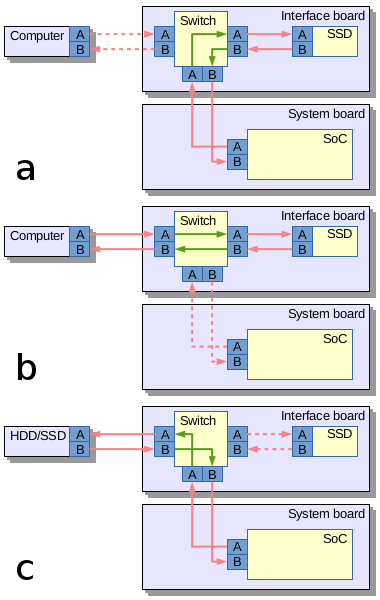

Fig.2. SATA routing: a) Camera records data to the internal SSD; b) Host computer connects directly to the internal SSD; c) Camera records to the external mass storage device

The most universal ways to attach a mass storage device to the camera would be USB, SATA and PCIe. USB-2 is too slow, and USB-3 is not available in the Xilinx Zynq that we use, so what remains are SATA and PCIe. Both interfaces are possible to implement in Zynq, but PCIe (being faster as it uses multiple lanes) is good only for the internal storage, while SATA (in the form of eSATA) can be used to connect external storage devices too. We may consider adding PCIe capability to boost recording speed, but for the initial implementation SATA seems to be more universal, especially when using a trick we tested in the Eyesis series of cameras for fast unloading of the recorded data.

Routing SATA in the camera

It is a solution similar to USB On-The-Go (a similar term for SATA is used for unrelated devices), where the same connector is used to interface a smartphone to the host PC (the PC is a host, the smartphone is a device) and to connect a keyboard or other device when the phone becomes a host. In contrast to USB cables, eSATA cables always had identical connectors on both ends, so nothing prevented anyone from physically linking two computers or two external drives together. As eSATA does not carry power it is safe to do, but nothing will work: two computers will not talk to each other and the storage devices will not be able to copy data between them. One of the reasons is that the two signal pairs in a SATA cable are uni-directional – pair A is an output for the host and an input for the device, pair B is the opposite.

The camera uses a Vitesse (now Microsemi) VSC3304 crosspoint switch (Eyesis uses the larger VSC3312) that has a very useful feature: its I/O ports are reversible, so the same physical pins can be configured as inputs or outputs, making it possible to use a single eSATA connector in both host and device modes. Additionally, the VSC3304 makes it possible to change the output signal level (eSATA requires a higher swing than internal SATA) and to perform analog signal correction on both inputs and outputs, which helps maintain signal integrity between the attached SATA devices.
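The three routing modes of Fig. 2 can be modelled as a small table of one-way connections through the switch. This is a conceptual sketch only: the port names (`zynq.tx`, `ssd.rx`, `esata.A`, `esata.B`) and the functions are hypothetical, and the code does not represent the VSC3304 register interface or the camera software.

```python
def route(mode):
    """Return the (source, destination) connections for one Fig. 2 mode.

    All port names are hypothetical labels used for illustration.
    """
    if mode == "a":   # camera records to the internal SSD
        return [("zynq.tx", "ssd.rx"), ("ssd.tx", "zynq.rx")]
    if mode == "b":   # external host computer talks to the internal SSD
        return [("esata.A", "ssd.rx"), ("ssd.tx", "esata.B")]
    if mode == "c":   # camera records to an external storage device
        return [("zynq.tx", "esata.A"), ("esata.B", "zynq.rx")]
    raise ValueError("unknown mode: " + mode)


def pin_direction(mode, pin):
    """Direction of a pin as seen by the switch in the given mode."""
    for source, destination in route(mode):
        if pin == source:
            return "input"    # the switch receives a signal on this pin
        if pin == destination:
            return "output"   # the switch drives this pin
    return "unused"


for mode in ("a", "b", "c"):
    print(mode, pin_direction(mode, "esata.A"), pin_direction(mode, "esata.B"))
```

In this model pair A of the eSATA connector is an input in mode b (the external computer is the host) and an output in mode c (the camera is the host), which is exactly the reversal that requires reversible I/O ports.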

Aren’t SATA implementations for Xilinx Zynq already available?

Yes and no. When starting the NC393 development I contacted Ashwin Mendon, who already had SATA-2 working on Xilinx Virtex. The code is available on OpenCores under the GNU GPL license, and there is an article published by IEEE. The article turned out to be very useful for our work, but the code itself had to be mostly re-written: it was written for different hardware, and we were not able to simulate the core because it depends on Xilinx proprietary encrypted primitives, a feature not compatible with the free software simulators we use.

Other implementations we could find (including a complete commercial solution for Xilinx Zynq) have licenses not compatible with the GNU GPLv3+, and as the FPGA code is “compiled” to a single “binary” (bitstream file), it is not possible to mix free and proprietary code in the same design.


February 11, 2016

by Olga Filippova

The components for 10393 and other related circuit boards for the new NC393 camera series have been ordered and contract manufacturing (CM) is ready to assemble the first batch of camera boards.

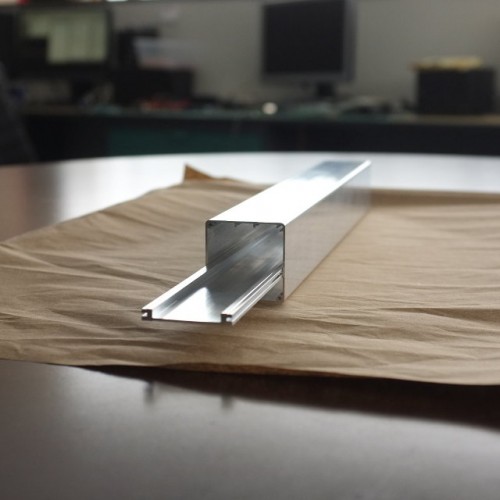

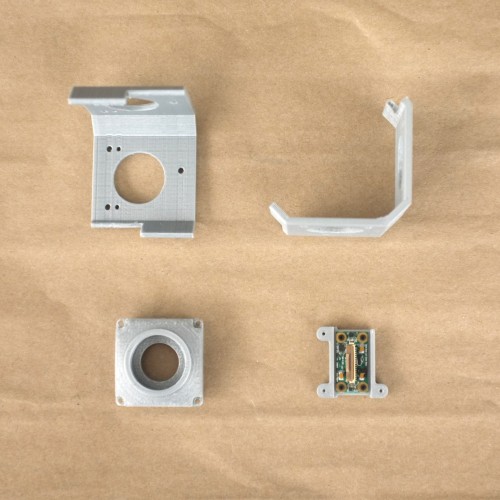

In the meantime, the extruded parts that will be made into NC393 camera body have been received at Elphel. The extrusion looks very slick with thin, 1mm walls made out of strong 6061-T6 aluminium, and weighs only 55g. The camera’s new lightweight design is suitable for use on a small aircraft. The heat frame responsible for cooling the powerful processor has also been extruded.

We are very pleased with the performance of Profile Precision Extrusions, located in Phoenix, Arizona, which has delivered a very accurate product ahead of the proposed schedule. Now we can proudly engrave “Made in USA” on the camera, as even the camera body parts are now made in the United States.

Of course, we have tried to order the extrusion in China, but the intricately detailed profile is difficult to extrude and tolerances were hard to match, so when Profile Precision was recommended to us by local extrusion facilities we were happy to discover the outstanding quality this company offers.

While waiting for the extruded parts we have been playing with another new toy: the 3D printer. We have been creating prototypes of various camera models of the NC393 series. The cameras are designed and modelled in a 3D virtual environment, and can be viewed and even taken apart by mouse click thanks to X3dom technology. The next step is to build actual parts on the 3D printer and physically assemble the camera prototypes, which will allow us to start using the prototypes in the physical world: finding what features are missing, and correcting and finalizing the design. For example, when the mini-panoramic NC393-4PI4 camera prototype was assembled, it became clear that it needs the 4 fins (now seen on the final model) to protect the lenses from touching surfaces as well as to provide shade from the sun. NC393-4PI4 and NC393-4PI4-IMU-GPS are small 360 degree panoramic cameras assembled with 4 fish-eye lenses, especially suitable for interior panoramic applications.

The prototypes are not as slick as the actual aluminium bodies, but they give a very good example of what the actual cameras will look like.

As of today, the 10393 and other boards are in production, the prototypes are being built and tested for design functionality, and the aluminium extrusions have been received. With all this taken care of, we are now less than one month away from the NC393 being offered for sale; the first cameras will be distributed to the loyal Elphel customers who placed and pre-paid their orders several weeks ago.

November 12, 2015

by Andrey Filippov

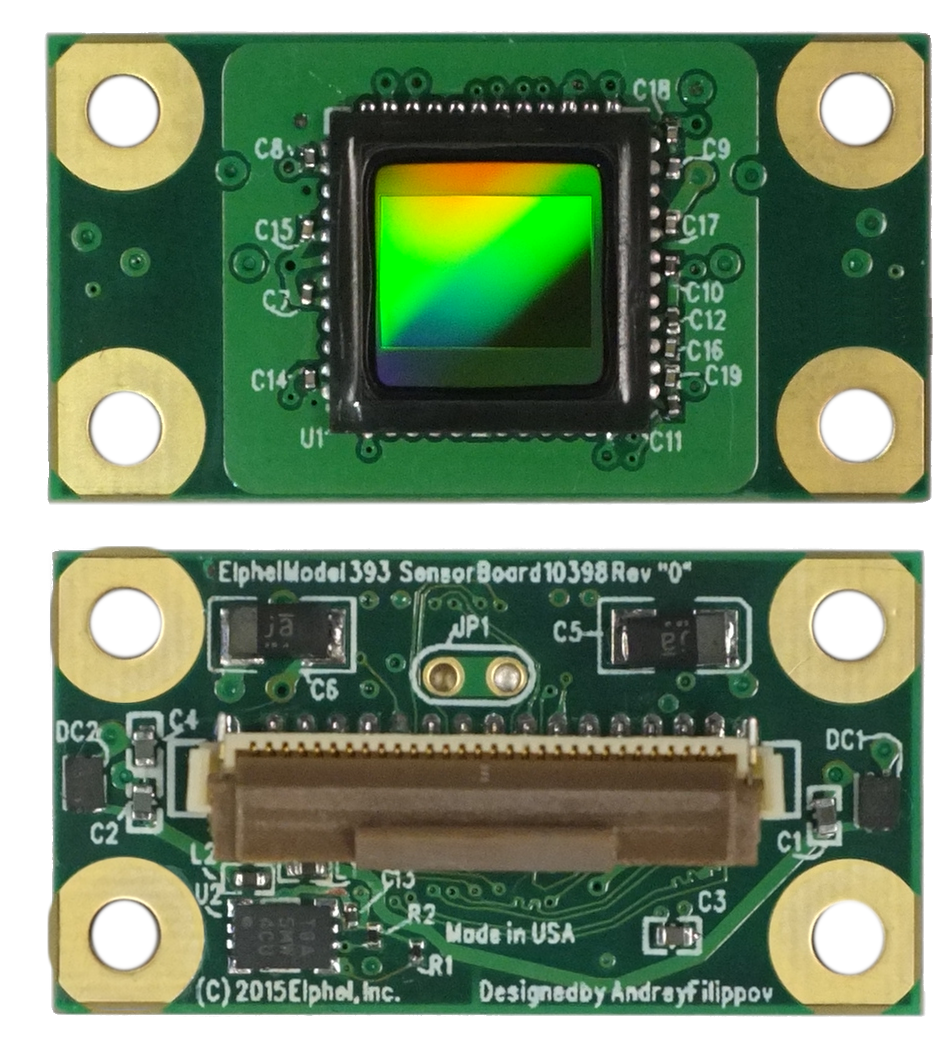

Sensors (ON Semiconductor MT9F002) and blank PCBs arrived in time, so I was able to hand-assemble two 10398 boards and start testing them. I had some minor problems getting data output from the first board, but it turned out to be just my bad soldering of the sensor; the second board worked immediately. To my surprise I did not have any problems with the HiSPi decoder that I simulated using the sensor model I wrote myself from the documentation, so the color bar test pattern appeared almost immediately, followed by the real acquired images. I kept most of the sensor settings unmodified from the default values and just selected the correct PLL multiplier, output signal levels (1.8V HiVCM, compatible with the FPGA) and the packetized format. The only other registers I had to adjust manually were the exposure and the color analog gains.

As it was reasonable to expect, the sensitivity of the 14MPix sensor is lower than that of the 5MPix MT9P006. Our initial estimate is that it is 4 times lower, but more careful measurements are needed to find the exposure required for pixel saturation with the same illumination. We set the analog channel gains for both sensors slightly higher than the minimum needed for saturation, but such rough measurements could easily miss a factor of 1.5. The MT9F002 offers more control over the signal chain gains, but any (even analog) gain in the chain that boosts the signal above the minimum needed for saturation proportionally reduces the used “well capacity”, while I expect the Full Well Capacity (FWC) is already not very high for a sensor with 1.4μm x 1.4μm pixels. A decrease in the number of electrons stored in a pixel accordingly increases the relative shot noise, which reveals itself in the highlight areas. We will need to accurately measure the FWC of the MT9F002 and get a better sensitivity comparison, including that of the binned mode, but I expect to find out that 5MPix sensors are not obsolete yet and for some applications may still have advantages over the newer sensors.
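The relation between gain, used well capacity and shot noise can be sketched numerically. Photon shot noise follows Poisson statistics, so for N collected electrons the noise is √N and the relative noise is 1/√N. The full well value below is a made-up placeholder, not a measured FWC of either sensor, and the function names are only for illustration.

```python
import math


def relative_shot_noise(electrons):
    """Relative shot noise (noise / signal) for a Poisson process: 1 / sqrt(N)."""
    return 1.0 / math.sqrt(electrons)


def highlight_noise(full_well_electrons, gain):
    """Relative shot noise at saturation when the gain exceeds the minimum.

    gain = 1.0 means the output saturates exactly when the well is full;
    a larger gain saturates the output with only full_well / gain electrons.
    """
    used_electrons = full_well_electrons / gain
    return relative_shot_noise(used_electrons)


HYPOTHETICAL_FWC = 4000.0  # placeholder value, electrons

for gain in (1.0, 2.0, 4.0):
    noise = highlight_noise(HYPOTHETICAL_FWC, gain)
    print("gain", gain, "relative noise in highlights: %.2f%%" % (100 * noise))
```

Whatever the real FWC is, the scaling is the same: a 4 times higher gain uses a quarter of the well and doubles the relative shot noise in the highlights.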


November 4, 2015

by Andrey Filippov

All the PCBs for the new camera (10393, 10389 and 10385) are modified to rev “A”. We have already received the new boards from the factory and are now waiting for the first production batch to be built. The PCB changes are minor: connectors were moved away from the board edge to simplify the mechanical design and improve thermal contact of the heat sink plate with the camera body. Additionally, the 10389A got an M.2 connector instead of the mSATA one to accommodate modern SSDs.

While waiting for the production we designed a new sensor board (10398) that has exactly the same dimensions and image sensor format as the current 10338E, so it is compatible with the hardware for the calibrated sensor front ends we use in photogrammetric cameras. The difference is that its MT9F002 sensor is a 14 MPix device and has a high-speed serial interface instead of the legacy parallel one. We expect to get the new boards and the sensors next week and will immediately start working with this new hardware.


September 18, 2015

by Andrey Filippov

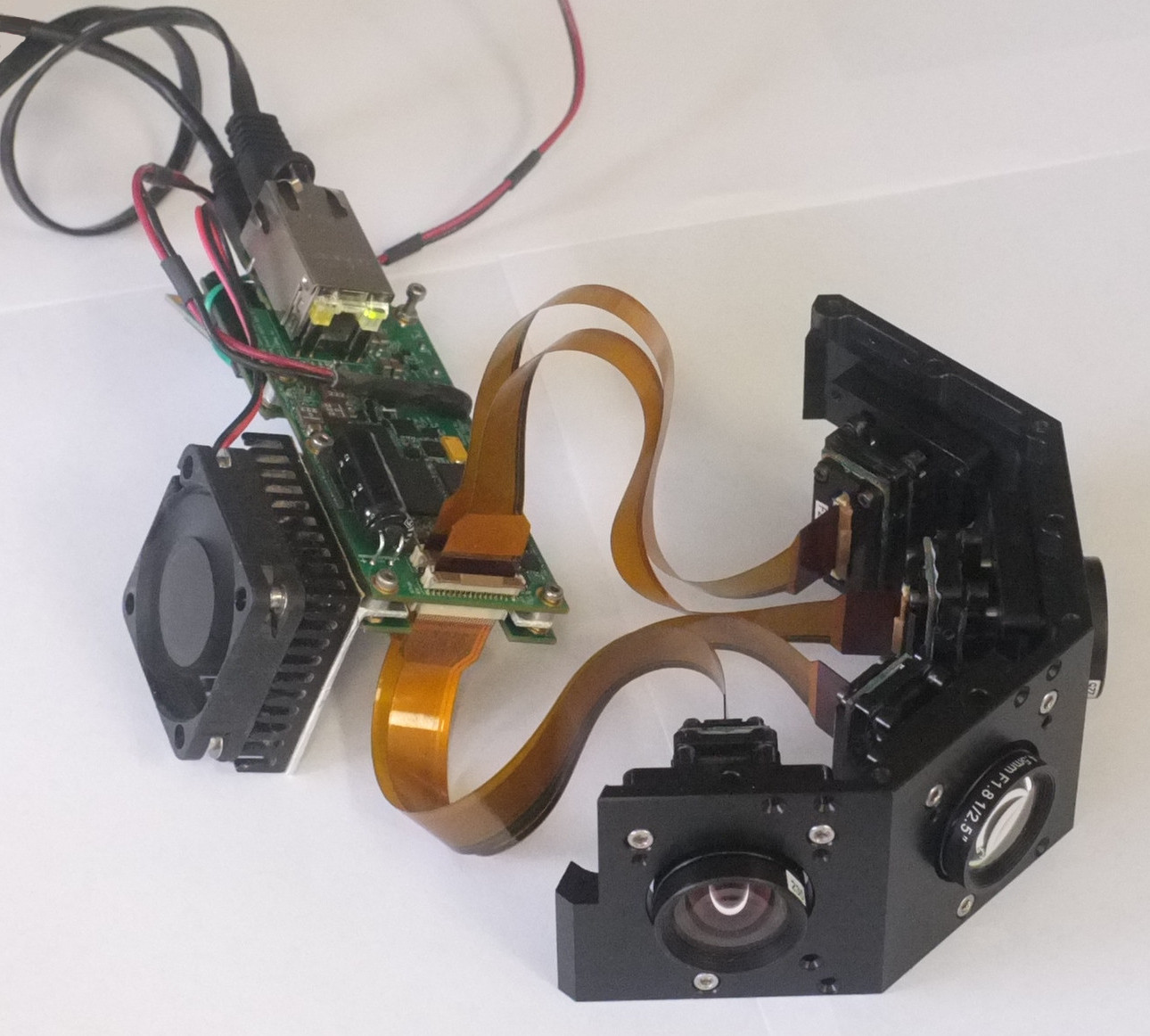

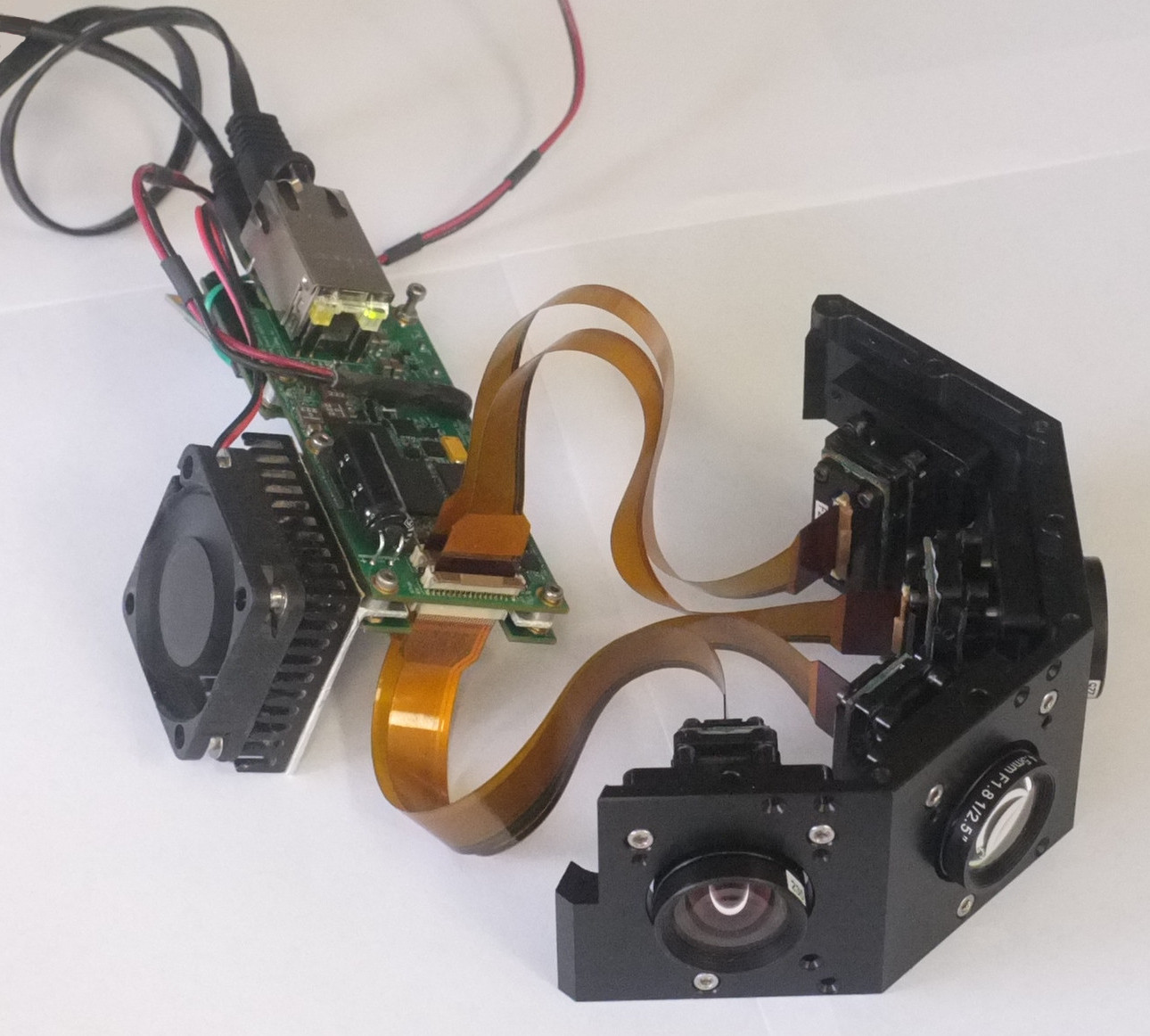

10393 with 4 image sensors

Finally all the parts of the NC393 prototype are tested and we now can make the circuit diagram, parts list and PCB layout of this board public. About half of the board components were tested immediately when the prototype was built – it was almost two years ago – those tests did not require any FPGA code, just the initial software that was mostly already available from the distributions for the other boards based on the same Xilinx Zynq SoC. The only missing parts were the GPL-licensed initial bootloader and a few device drivers.


July 29, 2015

by Andrey Filippov

Another update on the development of the NC393 camera: we finished adding the FPGA code that re-implements the functionality of the NC353 camera (just with additional multi-sensor capability), including JPEG/JP4 compressors, IMU/GPS logger and inter-camera synchronization. The next step is simulation and debugging, which will use co-simulation of the same sensor image data with the code of the existing NC353 camera. This involves updating that camera code to a state compatible with the development tools we use, and so an additional sub-project was spawned.


July 9, 2015

by Alexey

Widespread high-speed protocols, which are based on serial interfaces, have become easier and easier to implement on FPGAs. If you take a look at Xilinx’s chip series, you can follow the evolution of embedded transceivers from some awkwardly inflexible models to much more compatible ones. Nowadays even the affordable 7 series FPGAs possess GTX transceivers. Basically, they represent a unification of the PHY levels of various protocols, where the versatility is provided by parameters and control input signals.

The problem is, for some reason GTX’s simulation model is a secured IP block. It means that without proprietary software it’s impossible to compile and simulate the transceiver. Moreover, we use Icarus Verilog for these purposes, which doesn’t provide deciphering capabilities for now, and doesn’t seem to ever be able to do so: http://sourceforge.net/p/iverilog/feature-requests/35/

Still, our NC393 camera has to use GTX as a part of the SATA host controller design. That’s why it was decided to create a small simulation model that behaves as GTX, at least within some limitations and assumptions. This was done so that we could create a full-fledged non-synthesizable verification environment and provide our customers with a solution that is universal for simulation purposes.


June 9, 2015

by Andrey Filippov

Quick update: a new chunk of code is added to the NC393 camera FPGA project. It is the second of the three major parts of the system needed to match the existing NC353 functionality; the first, the memory controller, is already finished. This code is just written; it still has to be verified by simulation first, and then by synthesizing and running it on the actual hardware. We plan to do that when the third part, the image compressors, is ported to the new system too. The added code deals with receiving data from the image sensors and pre-processing it before storing it in the video memory. FPGA-based systems are very flexible, and many other configurations, like support of multi-lane serial interface sensors or using several camera ports to connect a single large high-speed sensor, are possible and will be implemented later. The table below summarizes the parameters of the current code only.

Table 1. NC393 Sensor Connections and Pre-processing

| Feature | Value |
|---|---|
| Number of sensor ports | 4 |
| Total number of multiplexed sensors | 16 |
| Total number of multiplexed sensors with existing 10359 multiplexer board | 12 |
| Sensor interface type (implemented in HDL) | parallel 12 bits |
| Sensor interface hardware compatibility | parallel LVCMOS/serial differential 8 lanes + clock |
| Sensor interface voltage levels | programmable up to 3.3V |
| Number of I²C sequencers | 4 (1 per port) |
| Number of I²C sequencers frames | 16 |
| Number of I²C sequencers commands per frame | 64 |
| I²C sequencers commands data width | 16/8 bits |
| Image data width stored | 16/8 bits per pixel |
| Gamma conversion regions per port | 4 |
| Histograms: number of rectangular ROI (Regions of Interest) per port | 4 |
| Histograms: number of color channels | 4 |
| Histograms: number of bins per color | 256 |
| Histograms: width per bin | 18 or 32 bits |
| Histograms: number of histograms stored per sensor | 16 |

May 9, 2015

by Andrey Filippov

Development of the NC393 camera has just passed an important milestone: we completed the HDL code that constitutes the core of this new camera and tested most of the Zynq-specific features that were not available in the older Spartan-3 FPGA used in our current NC353 devices. The next development phase will involve porting some of the existing code that deals with sensor interfacing, gamma correction, histograms, color conversion and JPEG/JP4 compression – code that was tested in thousands of cameras and many billions of processed images, including the applications listed in Wikipedia. The new camera is designed primarily for multisensor applications: up to four sensors connected directly to the system board, and more through multiplexers as we currently do in Eyesis4π cameras. It is the memory controller that had to be redesigned completely; the sensor and compressor channels can reuse most of the existing code and just have 4 instances of the same modules instead of a single one. Starting early this year I’ve got an opportunity to put aside other projects and work full time on the new camera code.


April 24, 2015

by Andrey Filippov

While working with the DDR3 memory interface I was not able to resist the temptation to investigate further a very useful feature of modern FPGA devices: individually programmed input/output delay elements on all (or at least many) of their pins. This is needed both to prepare for increasing the memory clock frequency and to be able to individually adjust the timing on other pads, such as the sensor ports, especially when switching from the parallel to the high-speed serial interface of modern image sensors.

The Xilinx Zynq device we are using has both input and output delays on all the low-voltage pins used for the memory interface in the camera, but only input delays on the higher voltage range I/O banks. Luckily, the image sensors connected to these banks need just that: the data rate to the sensors is much lower than the rate of the data they generate and send to the FPGA.

