by XorA

This article details the typical workflow I use when adding a new application recipe to OpenEmbedded from scratch. In this case the application is eyeos, an open source cloud computing application.

While following this article, referring to the OE wiki, especially the styleguide for new recipes, is highly recommended.


by XorA

This article details the workflow I personally use for kernel development on devices supported by OE. I find OE very useful for this, as I can use it to build the toolchain and to manage my patch tree until I am ready to send the patches upstream.

First I select the kernel I wish to develop with; in my case its recipe is recipes/elphel/linux-elphel_git.bb.

Next, I make sure I am starting from a clean state:

bitbake linux-elphel -c clean

Then I take the kernel as far as the configure stage. This makes sure that all patches in the metadata are applied, that the defconfig has been copied to .config, and that make oldconfig has been run.

bitbake linux-elphel -c configure

Now I switch to another window, where I will actually be editing the code, and change to the temporary working directory of the kernel I am working with. The path below will change depending on the kernel name and version. Kernels are always found in the machine work directory, `tmp/work/<machinename>-angstrom-linux-gnueabi/`:

cd tmp/work/elphel-10373-angstrom-linux-gnueabi/linux-elphel-2.6.31+2.6.32-rc8+r4+gitr2a97b06f43c616abb203f4c0eb40518c44c8d7fe-r28/
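Because the versioned directory name changes with every kernel version or revision bump, it can be handy not to type it by hand. The sketch below is a hypothetical helper (not part of OE) meant to be sourced into an interactive shell. It assumes the `tmp/work/<machinename>-angstrom-linux-gnueabi/<recipe>-<version>/` layout shown above and globs for the recipe's directory.

```shell
# Hypothetical helper, NOT part of OpenEmbedded. Source this file, then call
#   kernel_workdir <tmpdir> <machine> <recipe>
# Assumes the layout <tmpdir>/work/<machine>-angstrom-linux-gnueabi/<recipe>-<version>/
kernel_workdir() {
    tmpdir=$1    # e.g. tmp
    machine=$2   # e.g. elphel-10373
    recipe=$3    # e.g. linux-elphel
    match=""
    # The trailing "/" restricts the glob to directories; matches expand in
    # the shell's sort order, and we keep the last one that exists.
    for dir in "$tmpdir/work/$machine-angstrom-linux-gnueabi/$recipe"-*/; do
        [ -d "$dir" ] && match=$dir
    done
    if [ -z "$match" ]; then
        echo "no work directory found for $recipe" >&2
        return 1
    fi
    # Print the path without the trailing slash.
    printf '%s\n' "${match%/}"
}
```

For example, `cd "$(kernel_workdir tmp elphel-10373 linux-elphel)"` replaces the long cd line above. If several versions are present, the last match in sort order is not necessarily the newest, and the pattern would also match any other recipe whose name starts with `linux-elphel-`.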

At this point I normally use quilt to manage my patches temporarily, so I create a new patch:

quilt new new-feature.patch

Then I add the files I intend to change to this patch. I make sure to do this before making any edits, because the resulting patch is the diff between each file's state at the moment quilt add is called and its current state.

quilt add driver/camera/random.c
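The reason for adding files before editing them can be illustrated with plain cp and diff. The sketch below is a simplified model, not quilt itself: a hypothetical `track_file` function saves a pristine copy of a file (quilt similarly keeps backup copies of added files under its .pc/ directory), and a hypothetical `show_patch` diffs that copy against the working file. Any edit made before the snapshot becomes part of the "original" and never shows up in the patch.

```shell
# Simplified model of "quilt add" + "quilt diff"; NOT quilt itself.
# The backup directory name ".snap" is a hypothetical choice for this sketch.
# Paths are assumed to be relative to the current (kernel source) directory.

# Save a pristine copy of FILE; later edits are measured against this copy.
track_file() {
    mkdir -p ".snap/$(dirname "$1")"
    cp "$1" ".snap/$1"
}

# Print a unified diff from the saved copy to the current contents of FILE.
show_patch() {
    diff -u ".snap/$1" "$1"
    # diff exits 1 when the files differ; only >1 signals a real error.
    [ $? -le 1 ]
}
```

For example, running `track_file driver/camera/random.c` before editing and `show_patch driver/camera/random.c` afterwards prints only the changes made after the snapshot, which mirrors why quilt add has to come first.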


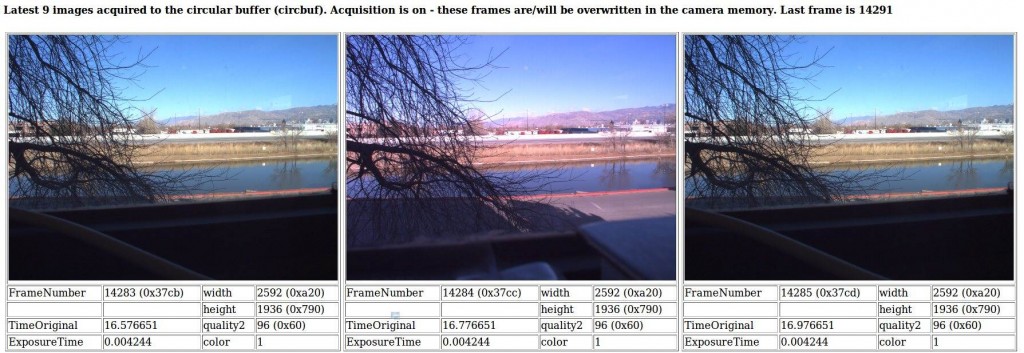

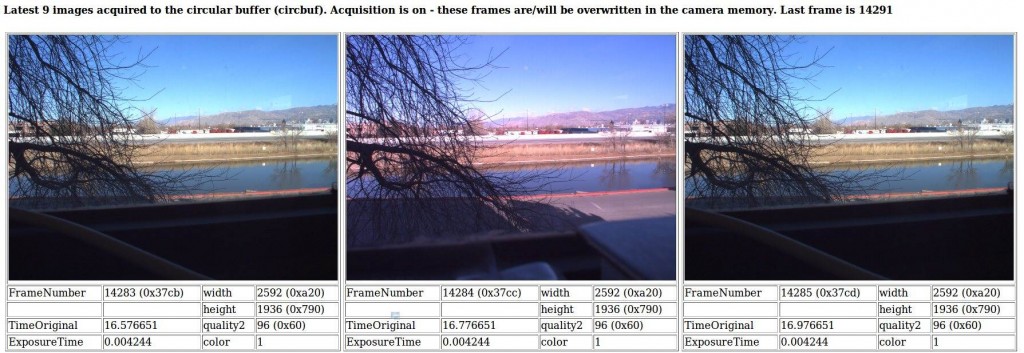

by Oleg Dzhimiev

Rewrote some blocks of the code for the 10359's FPGA and at last found out what the problem was in the alternation mode with buffering: the delay between the frames sent to the 10353 was too small. I had used a counter for that delay earlier, but it was only 16 bits wide, and that wasn't enough. I extended it to 32 bits and succeeded with a delay of about 2^20 clock cycles (2^20 × ~10 ns ≈ 10 ms). This is a bit much, but I'm not sure; I am probably missing something in the frame generation.
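A quick calculation shows why the 16-bit counter fell short, assuming the ~10 ns clock period quoted above (about 100 MHz):

```latex
\begin{aligned}
2^{16} \times 10\,\text{ns} &= 65\,536 \times 10\,\text{ns} \approx 0.66\,\text{ms} && \text{(longest delay a 16-bit counter can time)}\\
2^{20} \times 10\,\text{ns} &= 1\,048\,576 \times 10\,\text{ns} \approx 10.5\,\text{ms} && \text{(the delay that worked)}\\
2^{32} \times 10\,\text{ns} &\approx 42.9\,\text{s} && \text{(range of the 32-bit counter)}
\end{aligned}
```

So a 16-bit counter tops out at roughly 0.66 ms, a factor of 2^4 = 16 short of the ~10 ms delay that was needed.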

Andreas recently suggested adding a mode in which the alternating frames are combined into one, to make it easier to tell which frame belongs to which sensor. I coded a first version with simple buffering but haven't tested it much. Some notes:

- Two frames are combined vertically.

- The resolution of the resulting (combined) frame is set from the camera interface (camvc).

- The sensors are programmed to half the vertical size, and camvc doesn't know about it.
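To make the combined-frame arrangement concrete, here is a small sketch of how each row of the combined frame maps back to a sensor. It assumes, hypothetically, that the first sensor's frame fills the top half and the second sensor's the bottom half, and that the height set through camvc is even; the actual order in the 10359 code may differ, and the function names are illustrative only.

```shell
# Hypothetical model of the combined-frame layout: camvc sets the full frame
# height, each sensor is programmed to HEIGHT/2 rows, and the two half-height
# frames are stacked vertically (sensor 1 on top, by assumption).

# Rows each sensor must be programmed to deliver.
sensor_height() {
    echo $(( $1 / 2 ))
}

# Print "<sensor> <sensor_row>" for row ROW of a combined frame of HEIGHT rows.
row_source() {
    height=$1
    row=$2
    half=$(sensor_height "$height")
    if [ "$row" -lt "$half" ]; then
        echo "1 $row"
    else
        echo "2 $(( row - half ))"
    fi
}
```

For example, with a hypothetical 1944-row frame requested through camvc, each sensor would be programmed for 972 rows, and combined row 972 would be row 0 of the second sensor.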

I also updated the 10359 interface to switch between these modes and to change other settings.

It is worth mentioning that after the ‘automatic phase adjustment’ from the 10359’s interface, the sensors have different color gains in their registers, so these parameters need to be reprogrammed after phase adjustment.

What we’ve got now working in the 10359 is:

1. Alternation mode with (or without) buffering in the 10359’s DDR SDRAM.

2. Alternation mode with combined buffered frames.

TODO:

1. Make sure the sensors are programmed identically after phase adjustment.

2. Add a stereo module.