by Andrey Filippov

View the results

We had a nice New Year vacation (unfortunately a short one) at Maple Grove Hot Springs, and between soaks in the hot pools I tried an emerging technology I had never dealt with before – WebGL, a part of the HTML5 standard that brings the 3D capability of the graphics card to the browser, programmed (mostly) in familiar JavaScript. I first searched for existing panoramas to see what they look like and how responsive they are to mouse controls, but could not immediately find anything that worked (at least in Firefox 4.0b8, which I had just installed – you’ll need to enable “webgl.enabled_for_all_sites” in “about:config” if you would like to try it too). Then I found a nice tutorial whose examples worked without a glitch, and carefully read the first few lessons.

(more…)

by Andrey Filippov

UPDATE: The latest version of the page for comparing the results.

This is a quick update to the post “Zoom in. Now… enhance. – a practical implementation of the aberration measurement and correction in a digital camera”, published last month. That post had many illustrations of the image post-processing steps but lacked the most important part: real-life examples of processed images. At that time we simply did not have such images. We also had to find a way to acquire calibration images at a distance that can be considered “infinity” for the lenses – the first images used a shorter distance of just 2.25 m between the camera and the target; the target size was limited by the size of our office wall. Since then we have improved the software that combines the partial calibration images, converted the software to multi-threaded operation to increase performance (using all 8 threads of the 4-core Intel i7 CPU made processing approximately 5.5 times faster), and calibrated the two actual Elphel Eyesis cameras (only the 8 lenses around the head; the top fisheye is not done yet). We were then able to apply the recent calibration data (here is a set of calibration files for one of the 8 channels) to images we had acquired before the software was finished. (more…)
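
To put the multi-threading figure in perspective, here is a small sketch that turns the reported speedup into a parallel efficiency. The 5.5x speedup, 8 threads and 4 cores come from the text above; the function name is hypothetical and the calculation is plain arithmetic, not a measurement of the actual software.

```python
def parallel_efficiency(speedup: float, workers: int) -> float:
    """Fraction of ideal linear scaling achieved with `workers` workers."""
    if workers <= 0:
        raise ValueError("workers must be positive")
    return speedup / workers


REPORTED_SPEEDUP = 5.5   # from the post
HW_THREADS = 8           # from the post
PHYSICAL_CORES = 4       # from the post

per_thread = parallel_efficiency(REPORTED_SPEEDUP, HW_THREADS)
per_core = parallel_efficiency(REPORTED_SPEEDUP, PHYSICAL_CORES)

print(f"efficiency per hardware thread: {per_thread:.4f}")
print(f"speedup per physical core:      {per_core:.3f}")
```

A per-core value above 1 suggests that running two threads on each core contributed part of the gain, although the post does not break the number down further.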

by M@sh

In my search for an affordable and reasonable way to create high-res panoramic river views I came across the Elphel camera and its photo-finish mode. As part of my ongoing projects Danube Panorama Project and Nile Studies, and the new umbrella project River Studies, I capture virtually endless panoramic river views from a moving vessel with a slit- or line-scan method. I used webcams and DV cameras before, and every possible way to upgrade to higher resolution seemed either clumsy (I don’t want to carry much more than a tiny laptop or tablet and the camera itself), very processing-intensive, and/or expensive and far beyond my (very limited) budget.

The “all-purpose camera” Elphel stepped in as a good, reasonable and flexible solution for that task. The first season using an Elphel353 is over, so it is time to share some of my experiences with Elphel and its not so well known photo-finish mode for line-scanning.
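
For readers unfamiliar with slit- or line-scanning, the idea can be sketched in a few lines of Python: take one pixel column from every frame and place the columns side by side. This is only an illustration of the general technique on frames held in memory as nested lists; it is not how the camera's photo-finish mode is implemented, and the function name and frame layout are assumptions made for the example.

```python
def slit_scan(frames, column=None):
    """Build a panorama from one pixel column per frame.

    frames: iterable of 2D lists (rows of pixel values), all the same height.
    column: index of the column to sample; defaults to the frame centre.
    Returns a 2D list with one output column per input frame.
    """
    panorama = None
    for frame in frames:
        height = len(frame)
        col = len(frame[0]) // 2 if column is None else column
        if panorama is None:
            # One output row per image row, grown by one pixel per frame.
            panorama = [[] for _ in range(height)]
        elif height != len(panorama):
            raise ValueError("all frames must have the same height")
        for y in range(height):
            panorama[y].append(frame[y][col])
    return panorama or []


# Four synthetic 2x3 frames; pixel value encodes frame number and column.
frames = [[[t * 10 + x for x in range(3)] for _ in range(2)] for t in range(4)]
for row in slit_scan(frames):
    print(row)
```

Because each frame contributes a single column, the horizontal axis of the result is time rather than space, which is why the panorama can grow for as long as the vessel keeps moving.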

(more…)

by Andrey Filippov

Deconvolved vs. de-mosaiced original

(more…)

by Stefan de Konink

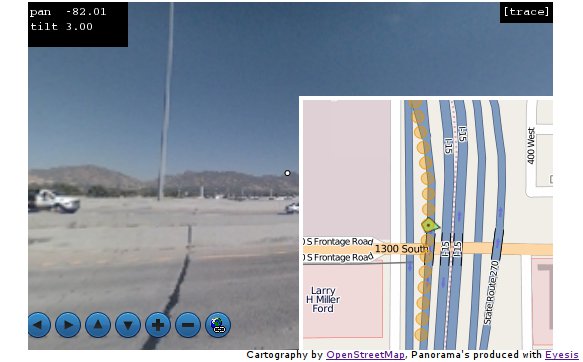

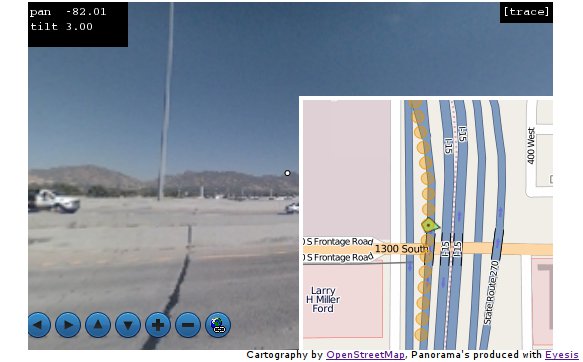

I have made some progress integrating OpenLayers with EyesisPlayer, resulting in EyesisMaps. Marek has added some JavaScript callbacks to EyesisPlayer that allow features in OpenLayers to be updated and vice versa. So we gained a movable arrow and a direct interface to a PostgreSQL database via FeatureServer.

We are still polishing it, but you can see the sneak preview:

by Stefan de Konink

Today I created a tiny bit of OpenLayers code for the Eyesis display page. It is basically a demo of what you can do by placing points of interest on a map: displaying the panorama and a smaller map.

I have not added a panorama player yet, but since it is only a matter of changing divs, that could be done easily. Personally I would like to go for an HTML5 kind of player, since for most browsers that would be the least resource-intensive way of displaying. The code is available at http://eyesis.openstreetphoto.org/; there are some images there, but only low-res ones from the initial stitching tryouts.

by Stefan de Konink

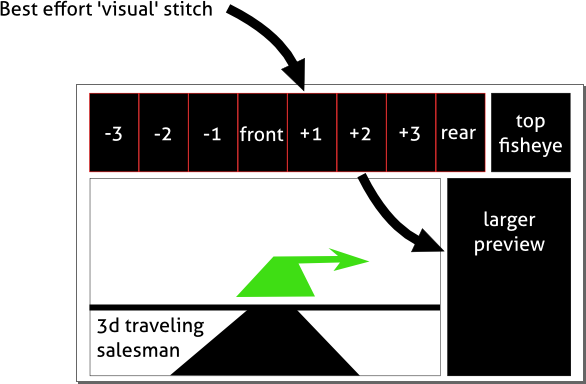

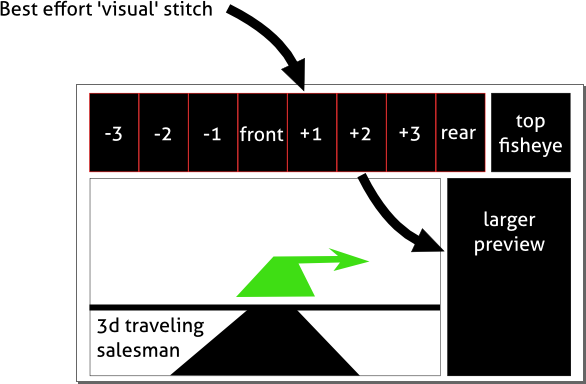

Tonight I was thinking about the possibilities for letting the driver actually see what he is shooting, while at the same time supporting applications such as traveling-salesman routing for the fastest shooting experience. I have some other ideas for the ‘main’ screen, such as a 2D map with trails showing where the car has been (or where photos were taken). It also seems logical to incorporate a menu to start or stop shooting and to view data statistics.

I would like to encourage others to come up with other applications that could be ‘nice’ to have while driving and shooting. Let me give it a shot: a ‘cute girl tag’ button.

by Stefan de Konink

A short update from the stitching team.

Jan Martin from diy-streetview.org tried his hand at the demo content in Hugin. This resulted in the following beautiful stitch.

Bruno Postle also sent us his attempt on the new footage.

Wim Koornneef (dmmdh productions) created an online, interactive, panorama view.

by Stefan de Konink

With the first Eyesis content available I started to prototype the panoramic workflow. We would like to have a reference design available so that the camera can be used without custom development. For practical reasons I have started with the relatively low-quality JPEG output. As with all current Elphel cameras, JPEG, JP4 and JP46 modes exist. For frame rate and quality the JP4 mode will be the sweet spot: it gives a theoretical maximum of 5.31 fps (panorama), with host-side debayering.

(more…)

by Olga Filippova

Elphel-Eyesis 1

On July 8 we had the first panoramic camera completely assembled and ready for a test ride. The total height is 1300 mm [4′ 3″]; it weighs 10 kg, or about 22 lbs. The power consumption is 36 W when the camera is in operation, measured at the AC (110/220 VAC) input. The camera head has eight 5 Mpix color sensors around and one pointing up, with a full resolution of ~38 MPix (45 MPix before stitching). The data storage box (also waterproof) at the bottom of the leg contains 3 swappable 2.5″ hard drives of 500 GB each, which is enough to record up to 12 hours of images taken at 5 fps (the maximum frame rate) at full resolution. Each image is geotagged via an external GPS unit attached through the sealed USB connector.
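
As a rough sanity check of these figures, the sketch below works out what the stated storage and recording time imply per panorama. The drive count, drive size, recording time, frame rate and sensor count are taken from the paragraph above; the result is a derived estimate that assumes decimal gigabytes, drives filled entirely with image data, and a sustained 5 fps. It is not a measured file size.

```python
DRIVES = 3
DRIVE_GB = 500            # assumed decimal gigabytes (10**9 bytes)
HOURS = 12
FPS = 5                   # panoramas per second
SENSORS = 9               # eight around plus one pointing up
MPIX_PER_SENSOR = 5


def storage_budget(drives=DRIVES, drive_gb=DRIVE_GB, hours=HOURS, fps=FPS):
    """Return (total bytes, panorama count, average bytes per panorama)."""
    total_bytes = drives * drive_gb * 10**9
    panoramas = hours * 3600 * fps
    return total_bytes, panoramas, total_bytes / panoramas


total_bytes, panoramas, bytes_per_pano = storage_budget()

print(f"raw resolution before stitching: {SENSORS * MPIX_PER_SENSOR} MPix")
print(f"panoramas in {HOURS} h at {FPS} fps: {panoramas}")
print(f"average budget per panorama: {bytes_per_pano / 1e6:.2f} MB")
print(f"average budget per sensor image: {bytes_per_pano / SENSORS / 1e6:.2f} MB")
print(f"sustained write rate: {total_bytes / (HOURS * 3600) / 1e6:.1f} MB/s")
```

The budget per 5 Mpix sensor image comes out below one megabyte on average, which is consistent with the images being stored compressed rather than raw.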

The 8 high-resolution lenses are arranged very compactly (the distance between entrance pupils is 29.5 mm), which keeps parallax very small. The high-res fisheye lens points to the sky.

The camera head is 210 mm [8.3″] in diameter, is waterproof, and contains 3 Elphel 10353 processor boards and 3 Elphel 10369 extension boards, which provide IDE, SATA, USB, RS232, and other interfaces (only the SATA, USB and sync I/Os are used in the Eyesis configuration). Nine sensor boards (10338D) are connected through three 10359A multiplexer boards that provide temporary storage for the images – all 3 sensors attached to the same 10359A board are triggered simultaneously, but data is transferred to the system boards one at a time. (more…)
